Last weekend, following Question Cathy's lead, I reached the "signed up for a free trial of Ancestry.com" level of cabin fever. Don't worry, I canceled in time.

It wasn't that I found the process uninteresting. There simply wasn't that much to learn. I confirmed what I already knew: everyone in my lines of ancestry is from Poland. And I mean everyone. There's none of this "Well I'm 1/16 Irish" stuff.

It's all Poland.

Additionally, there is nothing to be found much earlier than 1900. The paper trail starts when they arrived in the U.S. as immigrants. The documents (Army draft cards, immigration records, passports, naturalization paperwork, etc.) reflect the root of the problem – each person has a different birth date on almost every document. These were illiterate or barely literate people. They didn't even know with certainty their own birth dates, which was not uncommon in that era. And they inhabited a part of the world where written records that survived are not exactly ample. Some European countries like the U.K. seem to have a long and well-preserved tradition of records. Poland, which was overrun and traded among rival European powers for centuries, does not.

The other unsurprising find is that I descend from lines of entirely unremarkable people for the most part. I suppose everyone does genealogy hoping and expecting to find interesting stories or rich and famous long-lost relatives. I didn't harbor any illusions, but it's incredible the extent to which, prior to my dad (who was born in the U.S. and went to college), we were all…peasants, I guess. Because that's what the overwhelming majority of human history has been – anonymous people living anonymous lives trying to fend off death long enough to reproduce. My ancestors did what almost everyone's ancestors did for generations. They worked with their hands and their lower backs and aside from children they left essentially nothing to indicate that they ever lived.

But here's the interesting part.

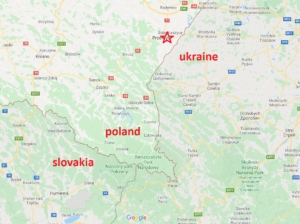

Many key people from my father's lineage hail from a place I'd never heard of called Bolestraszyce in southeastern Poland. I looked it up on a map.

Ethnicity and nationality are funny things. For all the family members who lived long enough for me to meet them, our ethnic identity as Poles has been extremely important to them. Polacks tend not to broadcast it in the same way that, say, Irish or Italian Americans do (like on t-shirts, for example) but like anyone else they seem to consider it a core part of their identity. And looking at that map, I can't help but laugh a little at how silly it is. Had they been born a few miles to the east, everything they ever felt about being Polish would have been Ukrainian. A little farther south and it would have been generations of Slovak pride.

Now, I know the borders in that area of the world have shifted around a lot, and there is more to the concept of an ethnic identity than to a national one. Plenty of people are living in X despite being Ethnic Y's. I just think about all the minor changes that could have taken what I believe is a long line of people in that area a few miles one way or the other. Would I feel any differently about myself if I were the exact same person, but "Ukrainian"?

Probably? I don't know. My guess is that whatever need ethnic identity fulfills for us psychologically can work regardless of which identity is involved. The cultural cues are different (Ukrainian churches are…something else) but fundamentally all of these people would have been the same. Jog the border a little bit one way or the other on a piece of land in modern Poland that has been Germany, Russia, Austria-Hungary, and a half dozen other nation-states that no longer exist and I can't imagine that the long-term outcomes across generations would matter much.

Perhaps the biggest difference would have been in immigration patterns. European immigrants famously had preferences for going where their ethnic predecessors went. Chicago was heavy on Poles and Slavic people in general. Ukrainians appear to have preferred staying on the East Coast, in the New York to Philadelphia belt. So maybe they wouldn't have gone to Chicago, and thereby everything would be different for me. Maybe I wouldn't even be here. But holding all else constant (I know, I know, it's a hypothetical) I can't imagine feeling fundamentally different about myself if I found out I was descended from people born on the opposite side of the metaphorical street.